Best Consumer-Grade AI GPUs of 2025

The AI boom has shifted into overdrive in 2025, and consumer GPUs are no longer just for gamers. Nvidia and AMD have turned their latest graphics cards into miniature AI workhorses, cramming them with faster memory, specialized tensor hardware, and lower-precision compute modes that cater to generative AI, LLM inference, and deep learning training.If you’re running Stable Diffusion, fine-tuning LLaMA, or spinning up transformer-based workflows locally, these are the GPUs worth your attention right now.

1.Nvidia RTX 5090

he GeForce RTX 5090 leads the current generation with unmatched AI performance. Nvidia built this Blackwell-based card with 32GB of GDDR7 memory, delivering 1.79TB/s of bandwidth. It runs on 5th-gen Tensor Cores and supports new data formats like FP4 and FP8, which help speed up inference and training. The card delivers up to 838 TOPS of INT8 performance and outpaces the 80GB A100 in LLM benchmarks, hitting over 5,800 tokens per second with optimized models.Stable Diffusion runs significantly faster on the 5090 compared to the 4090. Early tests show up to 2x speed increases when using FP4. With a TDP of 575W, the card requires high-end cooling and power delivery, but for AI developers working locally, the performance gains justify the size and heat.

2. Nvidia RTX 5080

RTX 5080 delivers many of the same AI features as the 5090 at a lower price. It uses 16GB of GDDR7 memory and pushes 960GB/s of memory bandwidth. Its 5th-gen Tensor Cores support FP4 and FP8 operations, and the card delivers around 450 TOPS for INT8 inference. It runs at 360W and has a smaller CUDA core count than the 5090 but retains strong generative AI performance.In practice, the 5080 performs 10-20% better than the RTX 4080 Super in AI benchmarks. It also outperforms the RTX 4090 in certain inference tasks where faster memory and newer tensor features come into play. This makes the 5080 a solid option for creators running LLMs or diffusion models that fit within 16GB of VRAM.

3. Nvidia RTX 4090

Nvidia RTX 4090 continues to serve as the gold standard for AI workloads among mainstream users. It ships with 24GB of GDDR6X memory, delivering roughly 1TB/s of bandwidth. The card features 4th-gen Tensor Cores and supports FP16 and BF16 operations. It delivers over 330 FP16 TFLOPS and performs well in both training and inference.LLMs with up to 30 billion parameters can run on the 4090 with 8-bit quantization. Stable Diffusion and other image generation models also benefit from the card’s high compute performance. Despite newer cards on the market, the RTX 4090 remains a reliable option for AI professionals and researchers.

4. Nvidia RTX 4080 Super & 4070 Ti Super

Nvidia launched the 4080 Super and 4070 Ti Super in early 2024. These Ada Lovelace refreshes improved on memory bandwidth and AI performance. The 4080 Super uses 16GB of GDDR6X and offers around 736GB/s bandwidth. It includes 4th-gen Tensor Cores and delivers up to 418 INT8 TOPS. The card consumes 320W and remains efficient for training and inference at mid-range batch sizes.The 4070 Ti Super also received 16GB of memory and an upgraded bus. It delivers about 353 INT8 TOPS and consumes 285W. While it cannot match the 4090 or 5080 in compute throughput, it performs well in local LLM inference and image generation tasks, making it a strong choice for budget-conscious developers.

5.AMD Radeon RX 9070 XT

AMD’s RDNA 4-based RX 9070 XT introduces significant AI upgrades to the Radeon family. The card includes second-generation AI accelerators, support for FP8, and improved ray tracing. It ships with 16GB of GDDR6 memory and reaches 640GB/s of memory bandwidth. Its estimated FP32 compute performance is around 48.7 TFLOPS.The RX 9070 XT provides around 389 INT8 TOPS. It runs at 300W and supports ROCm on Linux, allowing compatibility with PyTorch and TensorFlow. The card is best suited for AI-enhanced gaming, FSR4 upscaling, and small-scale inference tasks.

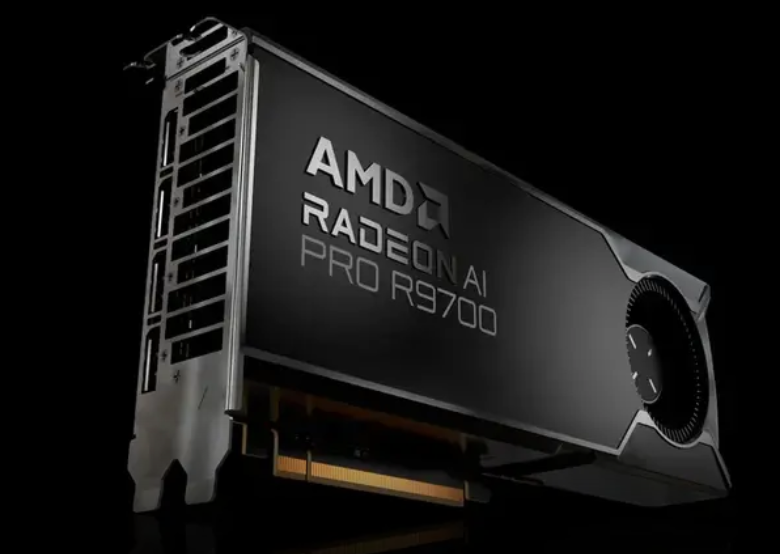

6. AMD Radeon AI Pro R9700

AMD launched the Radeon AI Pro R9700 as a workstation-grade GPU aimed at AI developers and creative professionals. It comes with 32GB of GDDR6 memory and uses the same RDNA 4 architecture as the 9070 XT but with double the compute units. The card provides around 383 INT8 TOPs, supports FP8 operations, and runs at 300W.The R9700 supports ROCm on both Linux and Windows, making it AMD’s most developer-friendly card yet. Its large VRAM buffer allows for fine-tuning and inference of LLMs beyond the capacity of the RX series. It performs well in multi-GPU setups and is positioned as a cost-effective alternative to Nvidia’s workstation-class cards.

INFORMATION CREDIT : Google

Follow For More : @TESVIPER10

I QUEST ON AND ON 💛 🖤

Please sign in

Login and share